Yesterday I detailed an incompatible breakage in AWS RDS Aurora MySQL 3.06.0; one option I gave was to upgrade to the just-released 3.07.0.

Turns out that does not work. It is not possible to upgrade any AWS RDS Aurora MySQL 3.x version to 3.07.0, making this release effectively useless.

3.06.0 to 3.07.0 fails

$ aws rds modify-db-cluster --db-cluster-identifier $CLUSTER_ID --engine-version 8.0.mysql_aurora.3.07.0 --apply-immediately

An error occurred (InvalidParameterCombination) when calling the ModifyDBCluster operation: Cannot upgrade aurora-mysql from 8.0.mysql_aurora.3.06.0 to 8.0.mysql_aurora.3.07.0

3.06.0 to 3.06.1 succeeds

Sometimes an upgrade requires being on the latest point release of the prior version first, so I moved to 3.06.1 and tried again.

3.06.1 to 3.07.0 fails

$ aws rds modify-db-cluster --db-cluster-identifier $CLUSTER_ID --engine-version 8.0.mysql_aurora.3.07.0 --apply-immediately

An error occurred (InvalidParameterCombination) when calling the ModifyDBCluster operation: Cannot upgrade aurora-mysql from 8.0.mysql_aurora.3.06.1 to 8.0.mysql_aurora.3.07.0
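A failed modify-db-cluster call can be avoided by first checking whether the target is listed as a valid upgrade target for the version you are running. What follows is a minimal sketch, assuming a configured AWS CLI; the function name `can_upgrade` is my own invention and not part of the CLI.

```shell
# Hypothetical helper (not part of the AWS CLI): succeeds only if TARGET is
# listed in ValidUpgradeTarget for the CURRENT engine version.
can_upgrade() {
  local current="$1" target="$2"
  aws rds describe-db-engine-versions \
    --engine aurora-mysql \
    --engine-version "$current" \
    --query 'DBEngineVersions[].ValidUpgradeTarget[].EngineVersion' \
    --output text |
    tr '\t' '\n' |          # text output is tab-separated; put one version per line
    grep -Fxq -- "$target"  # exact, whole-line match on the target version
}

# Example usage (commented out so sourcing this file makes no API calls):
# can_upgrade 8.0.mysql_aurora.3.06.1 8.0.mysql_aurora.3.07.0 || echo "no upgrade path"
```

The exit status is the only result, so it can guard the real upgrade command in a script.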

There is no upgrade path

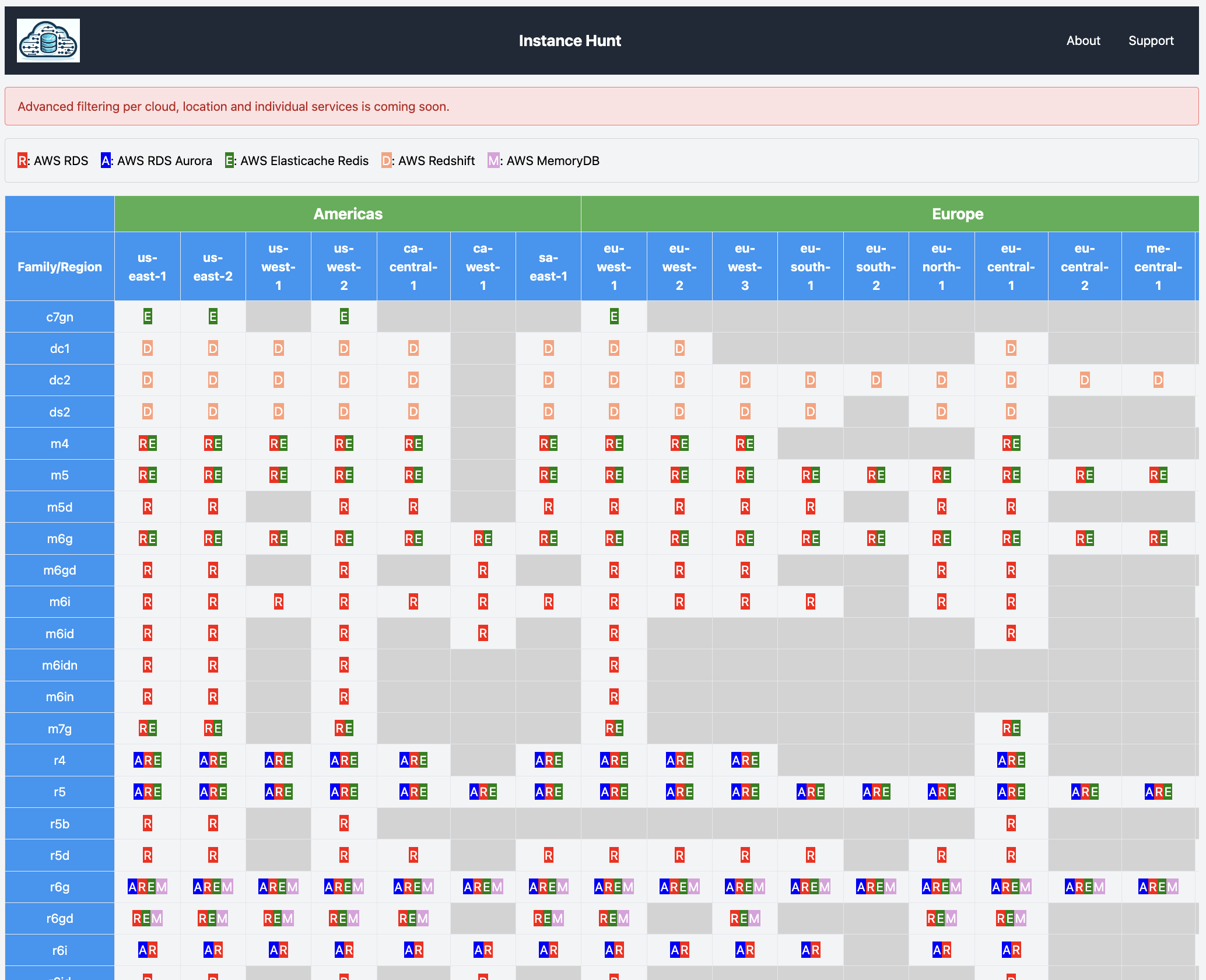

You can list the ValidUpgradeTarget entries for every version. There is in fact no version that can upgrade to AWS RDS Aurora MySQL 3.07.0.

Seems like a common test case was overlooked.

$ aws rds describe-db-engine-versions --engine aurora-mysql

...

{
  "Engine": "aurora-mysql",
  "Status": "available",
  "DBParameterGroupFamily": "aurora-mysql8.0",
  "SupportsLogExportsToCloudwatchLogs": true,
  "SupportsReadReplica": false,
  "DBEngineDescription": "Aurora MySQL",
  "SupportedFeatureNames": [],
  "SupportedEngineModes": [
    "provisioned"
  ],
  "SupportsGlobalDatabases": true,
  "SupportsParallelQuery": true,
  "EngineVersion": "8.0.mysql_aurora.3.04.1",
  "DBEngineVersionDescription": "Aurora MySQL 3.04.1 (compatible with MySQL 8.0.28)",
  "ExportableLogTypes": [
    "audit",
    "error",
    "general",
    "slowquery"
  ],
  "ValidUpgradeTarget": [
    {
      "Engine": "aurora-mysql",
      "IsMajorVersionUpgrade": false,
      "AutoUpgrade": false,
      "Description": "Aurora MySQL 3.04.2 (compatible with MySQL 8.0.28)",
      "EngineVersion": "8.0.mysql_aurora.3.04.2"
    },
    {
      "Engine": "aurora-mysql",
      "IsMajorVersionUpgrade": false,
      "AutoUpgrade": false,
      "Description": "Aurora MySQL 3.05.2 (compatible with MySQL 8.0.32)",
      "EngineVersion": "8.0.mysql_aurora.3.05.2"
    },
    {
      "Engine": "aurora-mysql",
      "IsMajorVersionUpgrade": false,
      "AutoUpgrade": false,
      "Description": "Aurora MySQL 3.06.0 (compatible with MySQL 8.0.34)",
      "EngineVersion": "8.0.mysql_aurora.3.06.0"
    },
    {
      "Engine": "aurora-mysql",
      "IsMajorVersionUpgrade": false,
      "AutoUpgrade": false,
      "Description": "Aurora MySQL 3.06.1 (compatible with MySQL 8.0.34)",
      "EngineVersion": "8.0.mysql_aurora.3.06.1"
    }
  ]
},
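Rather than reading the whole listing by eye, the CLI's `--query` option (a JMESPath expression) can narrow it to the versions that name a given target. This is a sketch, assuming a configured AWS CLI; `upgrade_sources` is a hypothetical name of mine.

```shell
# Hypothetical helper (not part of the AWS CLI): print every aurora-mysql
# engine version whose ValidUpgradeTarget list contains TARGET.
upgrade_sources() {
  local target="$1"
  # The inner filter yields an empty list (falsy in JMESPath) when no
  # ValidUpgradeTarget entry matches, so the outer filter drops that version.
  aws rds describe-db-engine-versions \
    --engine aurora-mysql \
    --query "DBEngineVersions[?ValidUpgradeTarget[?EngineVersion=='${target}']].EngineVersion" \
    --output text
}

# Example usage (commented out so sourcing this file makes no API calls):
# upgrade_sources 8.0.mysql_aurora.3.07.0
```

Given the listing above, I would expect that call to report no source versions for 3.07.0; results may differ by region and over time.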

Image generated by ChatGPT. Mistakes left as a reminder genAI is not there yet for text.
