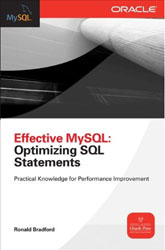

Announced on Sunday at Oracle Open World 2011 is the release of the Effective MySQL book series, starting with the “Optimizing SQL Statements” title. The goal of the Effective MySQL series is to be a highly practical, concise, and topic-specific reference that provides applicable knowledge on each page. One feedback comment received today was “no fluff”, which is a great comment that reinforces the practical nature of the series.

Details on the Effective MySQL Optimizing SQL Statements page include a sample chapter, code downloads, and purchase links for the print and e-book editions at Amazon, McGraw-Hill, and Barnes & Noble.