I have recently been working with the Donors Choose Open Data Set which happens to be in PostgreSQL. Easy enough to install and load the data in PostgreSQL, however as I live and breath MySQL, lets load the data into MySQL.

And here is where start our discussion, first some history.

SQL History

SQL – Structure Query Language is a well known common language for communicating with Relational Databases (RDBMS) . It is not the only language I might add, having both used many years ago and just mentioned QUEL at a Looker Look and Tell event in New York. It has also been around since the 1970s making it; along with C; one of oldest in general use programming languages today.

SQL became an ANSI standard in 1986, and an ISO standard in 1987. The purpose of a standard is to provide commonality when communicating or exchanging information; in our case; a programming language communicating with a RDBMS. There have been several iterations of the standard as functionality and syntax improves. These are commonly referred to as SQL-86, SQL-89, SQL-92, SQL:1999 , SQL:2003 , SQL:2006 , SQL:2008 and SQL:2011 .

And so, with SQL being a standard it means that what we can do in PostgreSQL should translate to what we can do in MySQL.

SQL Communication

Both products provide a Command Line Interface (CLI) client tool for SQL communication, mysql for MySQL and psql for PostgreSQL. No surprises there. Both use by default the semicolon ; as a SQL statement terminator, and both CLI tools use \q as a means to quit and exit the tool. Certainly not a standard but great for syntax compatibility.

DDL Specification

Our journey begins with defining tables.

DROP TABLE

Both products SQL syntax support DROP TABLE. Infact, both support the DROP TABLE [IF EXISTS] syntax.

DROP TABLE donorschoose_projects; DROP TABLE IF EXISTS donorschoose_projects;

CREATE TABLE

Both support CREATE TABLE.

Both support defining columns in the typical format <column_name> NOT NULL attribute. Talking about specific datatypes for columns is a topic on its own and so I discuss this later.

The PostgreSQL syntax was a table option WITHOUT OIDS which is not valid in MySQL. It is also obsolescent syntax in PostgreSQL 9.3. From the PostgreSQL manual “This optional clause specifies whether rows of the new table should have OIDs (object identifiers) assigned to them. The default is to have OIDs. Specifying WITHOUT OIDS allows the user to suppress generation of OIDs for rows of a table. This may be worthwhile for large tables … Specifying WITHOUT OIDS also reduces the space required to store the table on disk by 4 bytes per row of the table, thereby improving performance.”

In this example as this is just for testing, dropping the WITHOUT OIDS syntax creates a mutually compatible syntax.

Comments

Both MySQL and PostgreSQL support -- as an inline comment in an SQL statement. No need to strip those out.

ALTER TABLE

Both support ALTER TABLE ADD CONSTRAINT syntax which in our example is used to define the PRIMARY KEY, however while the syntax remains the same, the choice of datatype affects the outcome.

The following works in both products when the datatype is CHARACTER(32). More about CHARACTER() later.

ALTER TABLE donorschoose_projects ADD CONSTRAINT pk_donorschoose_projects PRIMARY KEY(_projectid);

In our example dataset, the primary key is defined with a TEXT datatype, and in MySQL this fails.

ERROR 1170 (42000): BLOB/TEXT column '_projectid' used in key specification without a key length

As the data in the dataset for primary keys by further analysis is indeed a 32 byte hexadecimal value, this is changed to CHARACTER(32) to be compatible for this data loading need. This however is an important key difference in any migration process with other data sets.

Side Note

Both products support the definition of the PRIMARY KEY in the CREATE TABLE syntax two different ways.

CREATE TABLE demo_pk1 (id character(32) NOT NULL PRIMARY KEY); CREATE TABLE demo_pk2 (id character(32) NOT NULL, PRIMARY KEY(id));

CREATE INDEX

Both use CREATE INDEX syntax however with our sample dataset, this is the first observed difference in syntax with provided sample SQL statements.

PostgresSQL

CREATE INDEX projects_schoolid ON projects USING btree (_schoolid);

MySQL In both products The following data types are defined in the PostgreSQL example data set. Each is discussed to identify a best fit in MySQL. For reference: This data type is for a fixed width character field and requires a length attribute. MySQL supports The following showing both variants is valid in both products. While this dataset does not use these datatypes, they are critical in the general conservations of character (aka string) types. This refers to a variable length string. While In PostgresSQL, In MySQL however Of all the complexity of this example dataset, the general use of PostgreSQL uses the And just to note the boundary of this data type. The difference is in out-of-bounds value management, and here MySQL defaults suck. You can read my views at DP#4 The importance of using sql_mode

. While not in this dataset, both support the And for reference, both products support Each product has additional integer data types. For a fixed-precision number, PostgreSQL uses This leads to a side-bar discussion on knowing your data-types for your product. In a recent interview for a MySQL Engineer, a candidate (with SQL Server experience) provided a code example defining the NUMERIC datatype. I knew it was technically valid in MySQL syntax, but never actually seen this in use. When I asked the candidate for what was the syntax commonly used for a fixed-precision datatype they were unable to answer. This dataset does not include these data types, however for reference, PostgresSQL uses Both PostgreSQL and MySQL use the Both PostgreSQL and MySQL use the In PostgresSQL, Using PostgresSQL A key difference in column definition is the PostgreSQL SQL:1999 calls for a Boolean datatype, and both PostgreSQL and MySQL support defining a column as BOOLEAN. MySQL however implicitly converts this to a SIGNED TINYINT, and any future DDL viewing shows this reference. When referencing boolean, in PostgreSQL Only the data types in this example have been reviewed. In our sample SQL script, there is The loading of data with the Finally, the Both PostgreSQL and MySQL have an ANALYZE command, however the syntax is different, with the required PostgresSQL MySQL MySQL has an At the MySQL BR Conference 2025 I had the opportunity to speak about Improving Your MySQL Security Posture. You can find a copy of my slides on my Presentations , and a Portugese (Brazil) translation. Last week I had the opportunity to speak at the MySQL and Heatwave Summit in San Francisco.

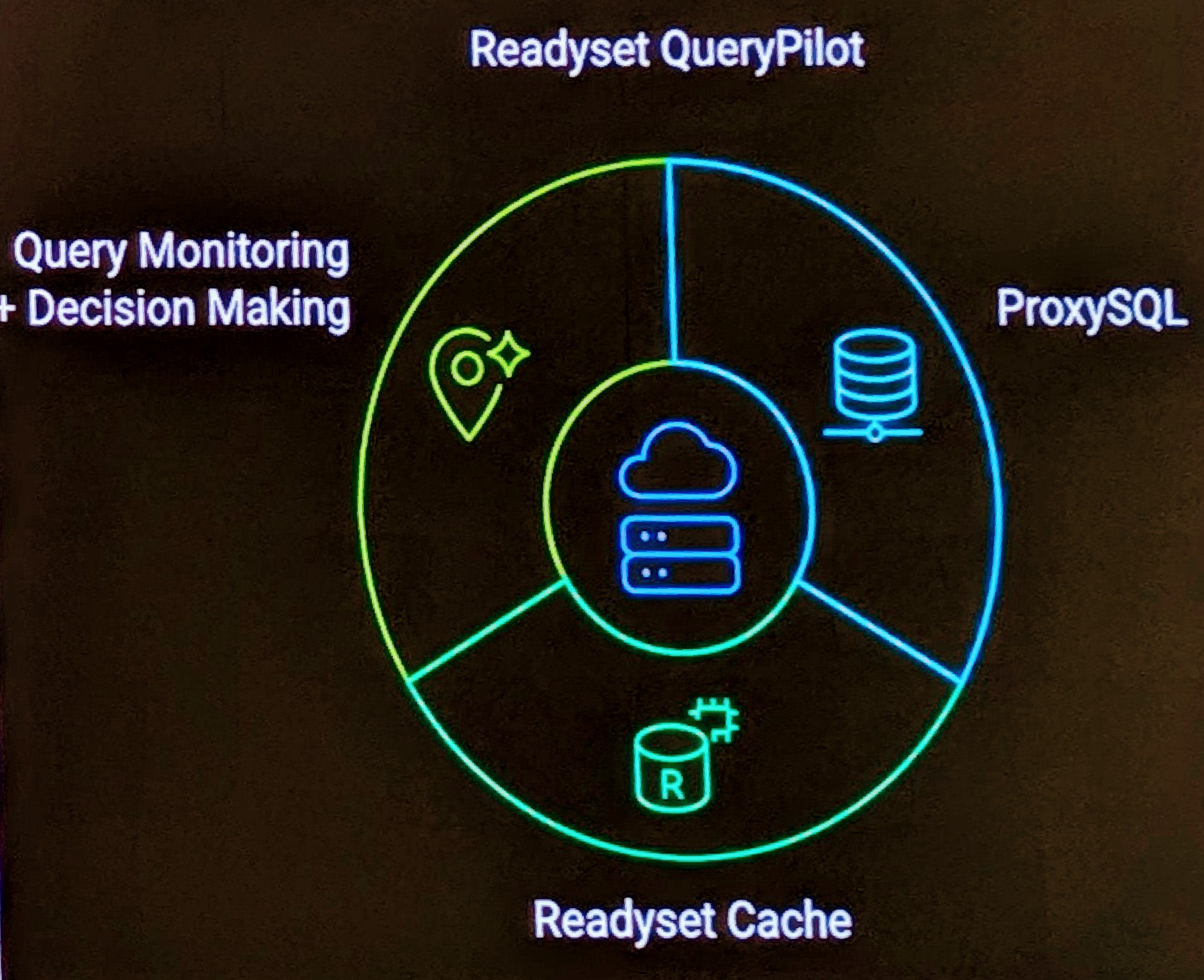

I discussed the impact of the new MySQL 8.0 default caching_sha2_password authentication, replacing the mysql_native_password authentication that was the default for approximately 20 of the 30 years that MySQL has existed. At the MySQL and Heatwave Summit 2025 today, Readyset announced a new data systems architecture pattern named Readyset QueryPilot . This architecture which can front a MySQL or PostgreSQL database infrastructure, combines the enterprise-grade ProxySQL and Readyset caching with intelligent query monitoring and routing to help support applications scale and produce more predictable results with varied workloads.

The USING .

CREATE INDEX USING btree projects_schoolid ON projects (_schoolid);

USING btree is an optional syntax (for minimum compatibility) purposes so removing this provides a consistency.Data Types

character

CHARACTER(n) syntax for compatibility, however generally CHAR(n) is the preferred syntax. Indeed, PostgreSQL also supports CHAR(n).CREATE TABLE demo_character(c1 CHARACTER(1), c2 CHAR(1));

varchar/character varying

character varying is not a valid MySQL syntax, varchar is compatible with both products.CREATE TABLE demo_varchar(vc1 VARCHAR(10));

text

text is used for variables of undefined length. The maximum length of a field is 1GB as stated in the FAQ

.TEXT only stores 2^16 characters (64K). The use of LONGTEXT is needed to support the full length capacity in PostgeSQL. This store 2^32 characters (~4GB).text will be the most difficult to modify to a more applicable VARCHAR or TEXT datatype when optimizing in MySQL.integer

integer datatype for a signed 4 byte integer value. MySQL supports the same syntax, however generally prefers to refer to the shorter INT syntax. Both products support both overall.mysql> CREATE TABLE demo_integer(i1 INTEGER, i2 INT);

Query OK, 0 rows affected (0.11 sec)

mysql> INSERT INTO demo_integer VALUES (1,-1);

Query OK, 1 row affected (0.05 sec)

mysql> SELECT * FROM demo_integer;

+------+------+

| i1 | i2 |

+------+------+

| 1 | -1 |

+------+------+

1 row in set (0.00 sec)

demo=# CREATE TABLE demo_integer(i1 INTEGER, i2 INT);

CREATE TABLE

demo=# INSERT INTO demo_integer VALUES (1,-1);

INSERT 0 1

demo=# SELECT * FROM demo_integer;

i1 | i2

----+----

1 | -1

(1 row)

mysql> TRUNCATE TABLE demo_integer;

Query OK, 0 rows affected (0.04 sec)

mysql> INSERT INTO demo_integer VALUES (2147483647, -2147483648);

Query OK, 1 row affected (0.04 sec)

mysql> SELECT * FROM demo_integer;

+------------+-------------+

| i1 | i2 |

+------------+-------------+

| 2147483647 | -2147483648 |

+------------+-------------+

1 row in set (0.00 sec)

demo=# TRUNCATE TABLE demo_integer;

TRUNCATE TABLE

demo=# INSERT INTO demo_integer VALUES (2147483647, -2147483648);

INSERT 0 1

demo=# SELECT * FROM demo_integer;

i1 | i2

------------+-------------

2147483647 | -2147483648

(1 row)

demo=# TRUNCATE TABLE demo_integer;

TRUNCATE TABLE

demo=# INSERT INTO demo_integer VALUES (2147483647 + 1, -2147483648 - 1);

ERROR: integer out of range

demo=# SELECT * FROM demo_integer;

i1 | i2

----+----

(0 rows)

mysql> TRUNCATE TABLE demo_integer;

Query OK, 0 rows affected (0.04 sec)

mysql> INSERT INTO demo_integer VALUES (2147483647 + 1, -2147483648 - 1);

Query OK, 1 row affected, 2 warnings (0.07 sec)

mysql> SELECT * from demo_integer;

+------------+-------------+

| i1 | i2 |

+------------+-------------+

| 2147483647 | -2147483648 |

+------------+-------------+

1 row in set (0.00 sec)

bigint data type. While the PostgreSQL docs

indicate bigint is 8 bytes, testing with PostgresSQL 9.3 failed. Something to investigate more later.demo=# CREATE TABLE demo_bigint(i1 BIGINT);

CREATE TABLE

demo=# INSERT INTO demo_bigint VALUES (2147483647 + 1), (-2147483648 - 1);

ERROR: integer out of range

mysql> CREATE TABLE demo_bigint(i1 BIGINT);

Query OK, 0 rows affected (0.12 sec)

mysql> INSERT INTO demo_bigint VALUES (2147483647 + 1), (-2147483648 - 1);

Query OK, 2 rows affected (0.04 sec)

Records: 2 Duplicates: 0 Warnings: 0

mysql> SELECT * from demo_bigint;

+-------------+

| i1 |

+-------------+

| 2147483648 |

| -2147483649 |

+-------------+

2 rows in set (0.01 sec)

smallint, a 2-byte integer.numeric

numeric but supports decimal.It would not be surprising to know that MySQL uses DECIMAL and for compatibility supports NUMERIC.real/double precision

real for 4 bytes, and double precision for 8 bytes. MySQL uses float for 4 bytes, and double for 8 bytes. MySQL however supports both PostgreSQL syntax options, however PostgreSQL supports float, but not double.demo=# CREATE TABLE demo_floatingpoint(f1 FLOAT, f2 REAL, d1 DOUBLE, d2 DOUBLE PRECISION);

ERROR: type "double" does not exist

LINE 1: ...TE TABLE demo_floatingpoint(f1 FLOAT, f2 REAL, d1 DOUBLE, d2...

demo=# CREATE TABLE demo_floatingpoint(f1 FLOAT, f2 REAL, d2 DOUBLE PRECISION);

CREATE TABLE

mysql> CREATE TABLE demo_floatingpoint(f1 FLOAT, f2 REAL, d1 DOUBLE, d2 DOUBLE PRECISION);

Query OK, 0 rows affected (0.07 sec)

date

date data type.timestamp

timestamp data type to store date/time values. However, there is a difference in both precision and implementation here.timestamp supports a date before EPOCH, while in MySQL it does not. MySQL uses the DATETIME datatype.timestamp and MySQL DATETIME, both support microsecond precision. MySQL however only started to provide this in MySQL 5.6

.timestamp without time zone syntax, used in our example dataset. Analysis of data loading will determine the impact here.boolean

WHERE column_name = TRUE or WHERE column_name = t retrieves a true value. In MySQL WHERE column_name = TRUE or WHERE column_name = 1. When you SELECT a boolean, in PostgresSQL the answer is ‘t’, in MySQL, the answer is 1.demo=# CREATE TABLE demo_boolean (b1 boolean);

CREATE TABLE

demo=# INSERT INTO demo_boolean VALUES (TRUE),(FALSE);

INSERT 0 2

demo=# SELECT * FROM demo_boolean;

b1

----

t

f

(2 rows)

mysql> CREATE TABLE demo_boolean (b1 boolean);

Query OK, 0 rows affected (0.11 sec)

mysql> INSERT INTO demo_boolean VALUES (TRUE),(FALSE);

Query OK, 2 rows affected (0.03 sec)

Records: 2 Duplicates: 0 Warnings: 0

mysql> SELECT * FROM demo_boolean;

+------+

| b1 |

+------+

| 1 |

| 0 |

+------+

2 rows in set (0.00 sec)

Other Data Types

Other syntax

psql specific syntax to show a debugging line with \qecho .... For compatibility these are removed.\COPY <table_name> FROM PSTDIN WITH CSV HEADER is PostgreSQL specific and so loading the data is a future topic.VACUUM ANALYZE <table_name> command is also PostgreSQL specific

and removed. This is a means effectively of optimizing and analyzing the table.TABLE keyword in MySQL.ANALYZE donorschoose_projects;

ANALYZE TABLE donorschoose_projects;

ERROR: syntax error at or near "table"

ANALYZE donorschoose_projects;

ERROR 1064 (42000): You have an error in your SQL syntax;...

ANALYZE TABLE donorschoose_projects;

OPTIMIZE TABLE syntax, however while technically valid syntax this is not compatible with the default storage table InnoDB.mysql> OPTIMIZE TABLE donorschoose_projects;

+----------------------------+----------+----------+-------------------------------------------------------------------+

| Table | Op | Msg_type | Msg_text |

+----------------------------+----------+----------+-------------------------------------------------------------------+

| test.donorschoose_projects | optimize | note | Table does not support optimize, doing recreate + analyze instead |

| test.donorschoose_projects | optimize | status | OK |

+----------------------------+----------+----------+-------------------------------------------------------------------+

2 rows in set (0.32 sec)

Tagged with:

Databases

MySQL

PostgreSQL

Technologies

Related Posts

Improving your MySQL Security Posture Presentation

MySQL and Heatwave Summit Presentation

Readyset QueryPilot Announcement