I am now sitting in on "MySQL and Search at Craigslist" by Jeremy Zawodny at the MySQL Users Conference.

Some of the technical challenges that needed addressing:

- High churn rate

- Half-life of a posting can be very short

- Growth

- Traffic

- Need to archive postings (e.g. 100M) while keeping them searchable

- Internationalization and UTF-8

Some of the Craigslist goals:

- Open Source

- Easy and approachable

- Be green with energy use

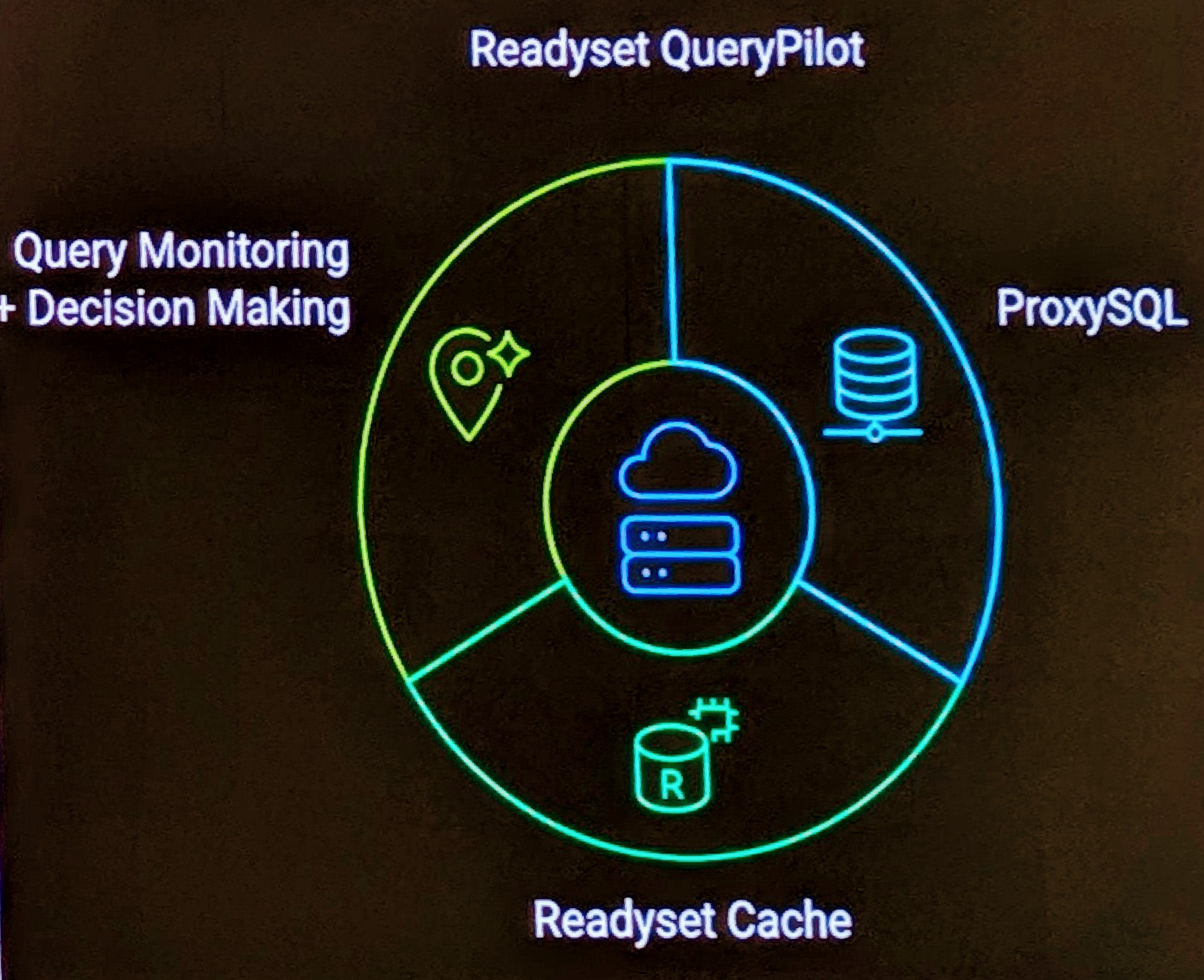

A review of the internal server configuration:

- Load Balancer (perlbal-like)

- Read Proxy Array (perl+memcached)

- Web Read Array (apache 1.3 + mod_perl)

- Object Cache (Perl + memcached)

- Read DB Cluster (MySQL 5.0.x)

- Search Cluster (Sphinx)
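To make the role of the object cache tier concrete, here is a minimal sketch of a read-through cache sitting in front of a database read. This is an illustration only: the talk did not describe the caching policy, so the read-through behaviour, the `ReadThroughCache` class, and the `fake_db_read` function are all assumptions, and a plain dict stands in for memcached.

```python
class ReadThroughCache:
    """Hypothetical read-through cache; a dict stands in for memcached."""

    def __init__(self, load_from_db):
        self._store = {}            # stand-in for the memcached tier
        self._load = load_from_db   # callable that reads from the DB tier
        self.hits = 0
        self.misses = 0

    def get(self, key):
        # Serve from the cache when possible.
        if key in self._store:
            self.hits += 1
            return self._store[key]
        # On a miss, fall through to the database and remember the result.
        self.misses += 1
        value = self._load(key)
        self._store[key] = value
        return value


db_reads = []

def fake_db_read(posting_id):
    """Invented stand-in for a query against the read DB cluster."""
    db_reads.append(posting_id)
    return {"id": posting_id, "title": f"posting {posting_id}"}


cache = ReadThroughCache(fake_db_read)
first = cache.get(42)    # miss: goes to the "database"
second = cache.get(42)   # hit: served from the cache
```

Only the first request for a given posting reaches the database in this sketch; repeated reads are absorbed by the cache, which is the general reason for putting such a tier in front of a read cluster.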

The clusters of DB servers are vertically partitioned by role. The roles are:

- Users

- Classified

- Forums

- Stats

- Archive
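As a rough illustration of vertical partitioning by role, the sketch below maps each role to its own cluster and picks a host within it. The host names, the `ROLE_CLUSTERS` table, and the modulo-based host choice are all hypothetical; the talk only listed the roles.

```python
# Hypothetical mapping of each role to its own DB cluster.
# Host names are invented for illustration.
ROLE_CLUSTERS = {
    "users": ["db-users-1", "db-users-2"],
    "classified": ["db-classified-1", "db-classified-2", "db-classified-3"],
    "forums": ["db-forums-1"],
    "stats": ["db-stats-1"],
    "archive": ["db-archive-1", "db-archive-2"],
}


def cluster_for(role):
    """Return the list of hosts that serve a given role."""
    try:
        return ROLE_CLUSTERS[role]
    except KeyError:
        raise ValueError(f"unknown role: {role!r}") from None


def pick_host(role, key):
    """Pick one host in the role's cluster, spreading reads by an integer key."""
    hosts = cluster_for(role)
    return hosts[key % len(hosts)]
```

With this layout a query about users never touches the forums or archive machines, so each cluster can be sized and tuned for its own workload.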

Sphinx, a standalone full-text search engine, is what they use for search. They did compare it with Apache Solr, but Solr seemed more complex. The Sphinx configuration:

- Partitioned based on cities (people search locally)

- Attributes vs. keywords

- Persistent Connections

- Minimal stopword list

- Partitioned into 2 clusters (1 master, 4 slaves)
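The city-based partitioning can be sketched as a small routing function. Everything named here is an assumption for illustration: the host names, the `postings_<city>` index naming, the CRC32-based choice of cluster, and the reading that each of the 2 clusters has 1 master and 4 slaves with searches served by the slaves. None of this is a Sphinx API; it only shows the routing idea.

```python
import zlib

# Hypothetical layout: 2 clusters, each with 1 master and 4 slaves.
CLUSTERS = [
    {"master": "sphinx-a-master",
     "slaves": ["sphinx-a-1", "sphinx-a-2", "sphinx-a-3", "sphinx-a-4"]},
    {"master": "sphinx-b-master",
     "slaves": ["sphinx-b-1", "sphinx-b-2", "sphinx-b-3", "sphinx-b-4"]},
]


def index_name(city):
    """Invented naming scheme: one index per city."""
    return "postings_" + city.strip().lower().replace(" ", "_")


def route(city, request_id):
    """Return (search host, index name) for a search within one city."""
    slug = city.strip().lower()
    # A stable hash keeps a given city on the same cluster every time.
    cluster = CLUSTERS[zlib.crc32(slug.encode("utf-8")) % len(CLUSTERS)]
    # Spread requests for that city across the cluster's slaves.
    slave = cluster["slaves"][request_id % len(cluster["slaves"])]
    return slave, index_name(city)
```

Because people search locally, a query only has to touch the index for one city, which keeps each index small and each query cheap.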

The results of implementing Sphinx were:

- Decrease from 25 MySQL boxes to 10 Sphinx boxes

- No locking

- 1,000+ qps

- 50M queries per day

- Better separation of code
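As a quick sanity check on how the two throughput figures relate, the sketch below converts 50M queries per day into an average rate. It assumes the daily total is spread over a full 24 hours and that the 1,000+ qps figure is a peak rate; neither was stated explicitly in the talk.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def average_qps(queries_per_day):
    """Average queries per second if the load were spread evenly over a day."""
    return queries_per_day / SECONDS_PER_DAY


avg = average_qps(50_000_000)
peak_to_average = 1_000 / avg

print(f"average: {avg:.0f} qps, peak/average: {peak_to_average:.1f}x")
```

Under those assumptions the average works out to roughly 579 qps, so a peak above 1,000 qps is about 1.7 times the average, which is plausible for traffic that follows a daily cycle.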