For any production MySQL Database system, running RAID is a given these days. Do you know what RAID your database is? Are you sure?. Ask for quantifiable reproducible output from your systems provider or your System Administrator.

As a consultant I don’t always know the specific tools for the clients deployed H/W, but I ask the question. On more the one occasion the actual result differed from the clients’ perspective or what they were told, and twice I’ve discovered that clients when asked if their RAID was running in a degraded mode, it actually was and they didn’t know.

You can read about various benchmarks at MySQL blogs such as BigDBAHead and MySQL Performance Blog however getting first hand experience of your actually RAID configuration, the H/W and S/W variables is critical to knowing how your technology works. You can then build on this to run your own benchmarks.

Over 50% of my clients run on DELL equipment, most using local storage or shared storage options such as Dell MD1000, Dell MD3000, NetApps or EMC. I’ve had the opportunity to spend a few days looking into the more details of RAID, specifically the DELL PERC 5/i Raid Controllers, and I’ve started a few MySQL Cheatsheets for my own reference that others may also benefit from.

Understanding PERC RAID Controllers gives an overview of using the MegaCLI tools to retrieve valuable information on the Adapter, Physical Drives, Logical Drives and the all important Battery Backed Cache.

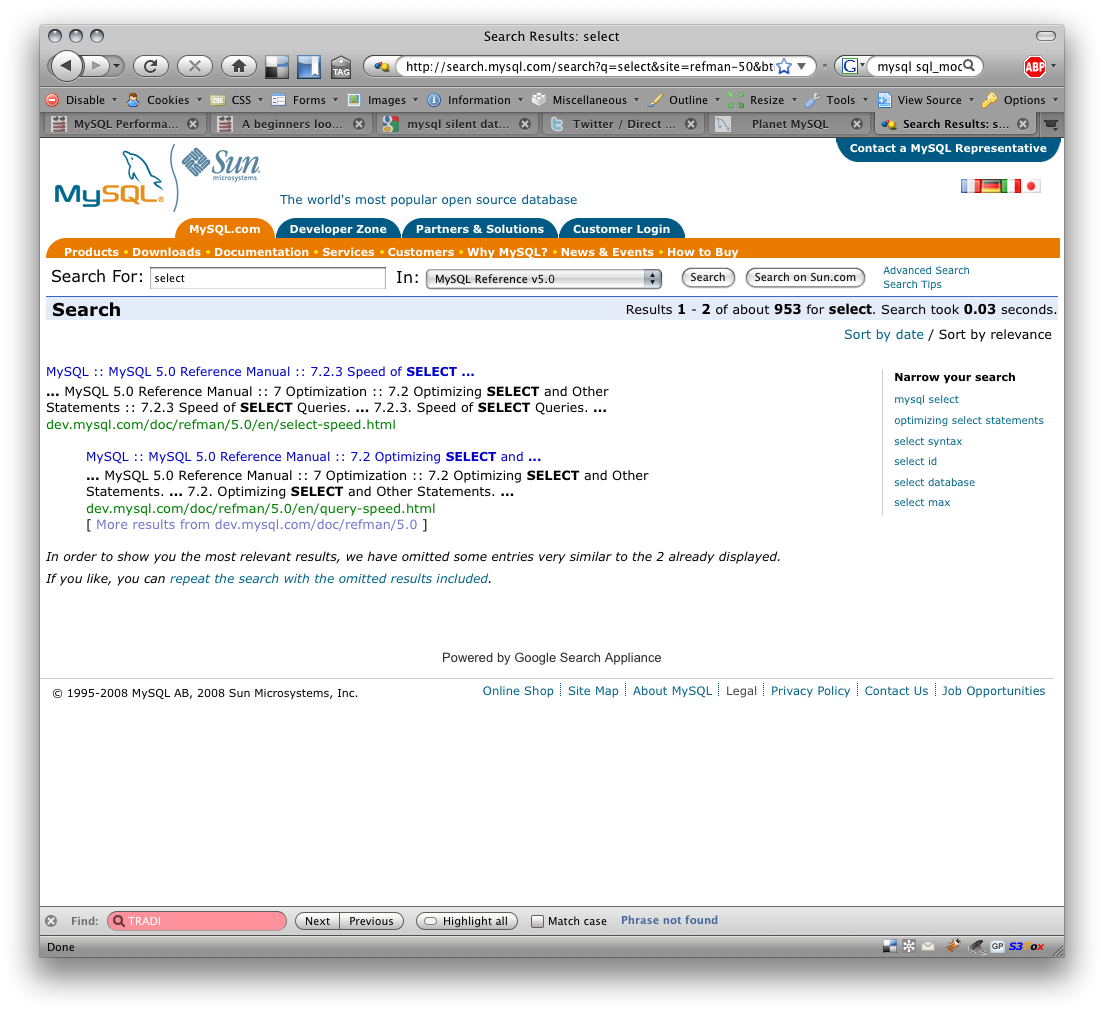

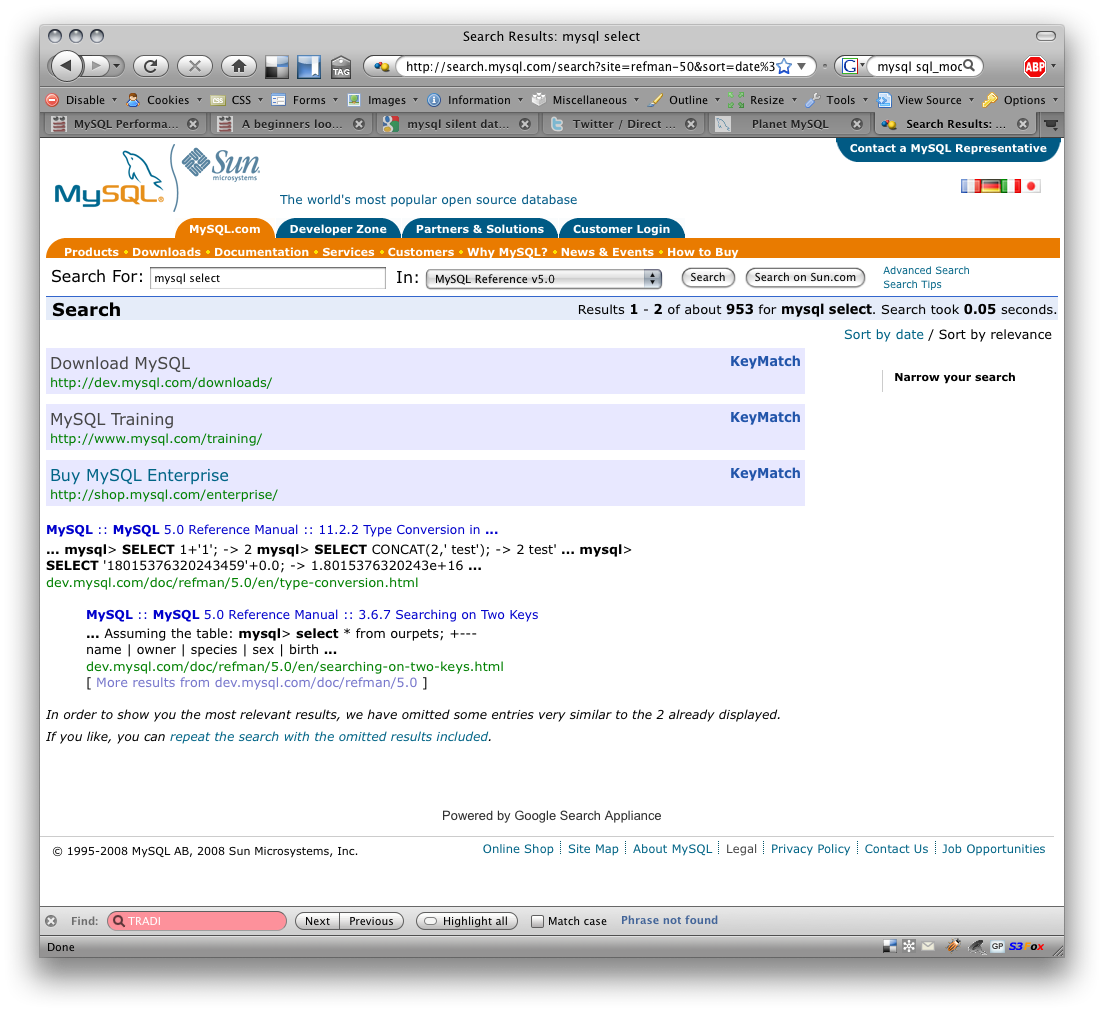

There are several Google search results out there about finding the MegaCLI tools, I found them to be all outdated. There is of course other tools including Dell OpenManage Server Administrator (GUI and CLI) and an Open Source project called megactl.

Here is just a summary of a few lines of each that yields valuable information:

Adapter Details

$ /opt/MegaRAID/MegaCli/MegaCli64 -AdpAllInfo -aALL RAID Level Supported : RAID0, RAID1, RAID5, RAID10, RAID50 Max Stripe Size : 128kB Stripe Size : 64kB

Physical Details

$ /opt/MegaRAID/MegaCli/MegaCli64 -LDPDInfo -aall Adapter #0 Number of Virtual Disks: 1 RAID Level: Primary-5, Secondary-0, RAID Level Qualifier-3 Size:208128MB Stripe Size: 64kB Number Of Drives:4 Default Cache Policy: WriteBack, ReadAheadNone, Direct, No Write Cache if Bad BBU Current Cache Policy: WriteBack, ReadAheadNone, Direct, No Write Cache if Bad BBU Raw Size: 70007MB [0x88bb93a Sectors] Inquiry Data: FUJITSU MAY2073RC D108B363P7305KAU Inquiry Data: FUJITSU MAY2073RC D108B363P7305KAJ Inquiry Data: FUJITSU MAY2073RC D108B363P7305JSW Inquiry Data: FUJITSU MAY2073RC D108B363P7305KB1

Battery

$ MegaCli -AdpBbuCmd -aALL Fully Charged : Yes Discharging : Yes BBU Capacity Info for Adapter: 0 Relative State of Charge: 100 % Absolute State of charge: 88 % Run time to empty: 65535 Min BBU Properties for Adapter: 0 Auto Learn Period: 7776000 Sec Next Learn time: 304978518 Sec

The big detail that was missing was the details in this ouput of the drive speed, such as 7.2K, 10K, 15K. What is the impact? Well that’s the purpose of the next step.

Following this investigation, testing of the RAID configuration with Bonnie++ was performed to determine the likely performance of various configurations, and to test RAID0, RAID1, RAID5 and RAID10.

Further testing that would be nice would include for example RAID 5 with 3 drives verses 4 drives. The speed of the drives, the performance in a degraded situation, and the performance during a disk rebuild.

This still leaves the question about how to test the performance with and without the Battery Backed Cache. You can easily disable this via CLI tools, but testing an actually database test, and pulling the power plug for example with and without would yield some interesting results. More concerning is when Dell specifically discharges the batters, and it takes like 8 hours to recharge. In your production environment you are then running in degraded mode. Disaster always happens at the worse time.