I was again reminded why setting SQL_MODE is so important in any new MySQL environment. While performing benchmark tests on parallel backup features with a common InnoDB tablespace and with per-table tablespaces, I inadvertently missed an important step in the data migration. As a result, the subsequent test that populated the data ran without any issues; however, there was no data in any InnoDB tables.

These are the steps used in the migration of InnoDB tables from a common tablespace model to a per-table tablespace model.

1. Dump all InnoDB tables

2. Drop all InnoDB tables

3. Shut down MySQL

4. Change the my.cnf to include innodb-file-per-table

5. Remove the InnoDB ibdata1 tablespace file

6. Remove the InnoDB transactional log files

7. Start MySQL

8. Verify the error log

9. Create and load new InnoDB tables
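Steps 5 and 6 are typically run through sudo, and that combination has a pitfall worth sketching. The shell of the calling user expands a wildcard before sudo starts, so when that user cannot list the data directory, the pattern matches nothing and is passed to the command unchanged. The snippet below is a minimal illustration, not the exact commands used in this migration; the `/var/lib/mysql` path and the `unmatched_glob` helper are assumptions for the example.

```shell
#!/bin/sh
# Assumed data directory; check the datadir setting in my.cnf.
DATADIR=/var/lib/mysql

# Fragile: the calling user's shell expands ib_logfile* BEFORE sudo runs.
# If that user cannot list $DATADIR, nothing matches and rm is handed the
# literal string "ib_logfile*", so the real log files stay in place
# (and with rm -f there is not even an error message).
#   sudo rm "$DATADIR"/ib_logfile*

# Safer: let a root shell perform the expansion.
#   sudo sh -c 'rm /var/lib/mysql/ib_logfile*'

# Hypothetical helper showing that an unmatched pattern is passed
# through unchanged by a POSIX shell.
unmatched_glob() {
    set -- /nonexistent-datadir/ib_logfile*
    printf '%s\n' "$1"
}
```

Listing the directory afterwards, again from a root shell, confirms whether the files are really gone before MySQL is started.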

However, step 6 was not performed correctly due to a sudo and shell wildcard issue. As a result, MySQL started without InnoDB, and the tables were subsequently created with the wrong storage engine. What should have happened was:

    mysql> CREATE TABLE `album` (
        -> `album_id` int(10) unsigned NOT NULL,
        -> `artist_id` int(10) unsigned NOT NULL,
        -> `album_type_id` int(10) unsigned NOT NULL,
        -> `name` varchar(255) NOT NULL,
        -> `first_released` year(4) NOT NULL,
        -> `country_id` smallint(5) unsigned DEFAULT NULL,
        -> PRIMARY KEY (`album_id`)
        -> ) ENGINE=InnoDB DEFAULT CHARSET=latin1;
    ERROR 1286 (42000): Unknown table engine 'InnoDB'

However, because MySQL by default falls back to the server's default storage engine, which was then MyISAM, no error occurred; the table was silently created as MyISAM with only a warning. For this statement to produce an error, an appropriate SQL_MODE is necessary.

    mysql> SET GLOBAL sql_mode='NO_ENGINE_SUBSTITUTION';
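Two caveats apply to the statement above: `SET GLOBAL` only affects connections opened afterwards, and the value is lost when the server restarts. It also replaces the whole mode string rather than appending to it. A small sketch for checking the effective value from a script follows; the `has_no_engine_substitution` helper is a hypothetical name, and the usage lines assume client credentials are already configured.

```shell
#!/bin/sh
# Succeeds when a comma-separated sql_mode string includes
# NO_ENGINE_SUBSTITUTION (hypothetical helper for this example).
has_no_engine_substitution() {
    case ",$1," in
        *,NO_ENGINE_SUBSTITUTION,*) return 0 ;;
        *) return 1 ;;
    esac
}

# Usage against a running server (assumes credentials in ~/.my.cnf):
#   mode=$(mysql -N -B -e 'SELECT @@GLOBAL.sql_mode')
#   has_no_engine_substitution "$mode" || echo "engine substitution allowed" >&2
#
# To keep the setting across restarts, the equivalent my.cnf entry is:
#   [mysqld]
#   sql_mode = NO_ENGINE_SUBSTITUTION
```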

A check of the MySQL error log shows why InnoDB was not available.

    120309 0:59:36 InnoDB: Starting shutdown...
    120309 0:59:40 InnoDB: Shutdown completed; log sequence number 0 1087119693
    120309 0:59:40 [Note] /usr/sbin/mysqld: Shutdown complete
    120309 1:00:16 [Warning] No argument was provided to --log-bin, and --log-bin-index was not used; so replication may break when this MySQL server acts as a master and has his hostname changed!! Please use '--log-bin=ip-10-190-238-14-bin' to avoid this problem.
    120309 1:00:16 [Note] Plugin 'FEDERATED' is disabled.
    120309 1:00:16 InnoDB: Initializing buffer pool, size = 500.0M
    120309 1:00:16 InnoDB: Completed initialization of buffer pool
    InnoDB: The first specified data file ./ibdata1 did not exist:
    InnoDB: a new database to be created!
    120309 1:00:16 InnoDB: Setting file ./ibdata1 size to 64 MB
    InnoDB: Database physically writes the file full: wait...
    InnoDB: Error: all log files must be created at the same time.
    InnoDB: All log files must be created also in database creation.
    InnoDB: If you want bigger or smaller log files, shut down the
    InnoDB: database and make sure there were no errors in shutdown.
    InnoDB: Then delete the existing log files. Edit the .cnf file
    InnoDB: and start the database again.
    120309 1:00:17 [ERROR] Plugin 'InnoDB' init function returned error.
    120309 1:00:17 [ERROR] Plugin 'InnoDB' registration as a STORAGE ENGINE failed.
    120309 1:00:17 [Note] Event Scheduler: Loaded 0 events
    120309 1:00:17 [Note] /usr/sbin/mysqld: ready for connections.
    Version: '5.1.58-1ubuntu1-log' socket: '/var/run/mysqld/mysqld.sock' port: 3306 (Ubuntu)
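The "Verify the error log" step can be scripted so that it is harder to skip. The sketch below simply searches for the registration failure line shown in the log above; the function name and the log path in the usage comment are assumptions, so check the log-error setting for the real location.

```shell
#!/bin/sh
# Succeeds when the given error log contains the InnoDB registration
# failure (hypothetical helper; the pattern is taken from the log above).
innodb_failed_to_start() {
    grep -q "Plugin 'InnoDB' registration as a STORAGE ENGINE failed" "$1"
}

# Usage (log path is an assumption):
#   if innodb_failed_to_start /var/log/mysql/error.log; then
#       echo "InnoDB is unavailable; do not load data yet" >&2
#   fi
```

Because the error log accumulates across restarts, a match may come from an earlier start, so it is worth reading the most recent startup entries as well. `SHOW ENGINES` in the client is another way to confirm whether InnoDB is available.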

NOTE: This was performed on Ubuntu using the distribution's standard MySQL 5.1 package.
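Tables created while InnoDB was unavailable will have been created with the fallback engine, so an audit of the actual engines is a useful follow-up. The following is a sketch under stated assumptions: the `non_innodb_tables` helper is hypothetical, the excluded schema list should be adjusted, and the filtering is done in awk only so the logic can be exercised without a server.

```shell
#!/bin/sh
# Reads tab-separated "schema<TAB>table<TAB>engine" rows on stdin and
# prints the rows whose engine is not InnoDB (hypothetical helper).
non_innodb_tables() {
    awk -F '\t' '$3 != "InnoDB" { print $1 "." $2 " (" $3 ")" }'
}

# Usage (assumes client credentials are configured):
#   mysql -N -B -e "SELECT table_schema, table_name, engine
#                   FROM information_schema.tables
#                   WHERE table_type = 'BASE TABLE'
#                     AND table_schema NOT IN ('mysql', 'information_schema')" \
#     | non_innodb_tables
```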

As previously mentioned, SQL_MODE may not be perfect; however, the features that do exist warrant correctly configuring your MySQL environment rather than relying on the default.

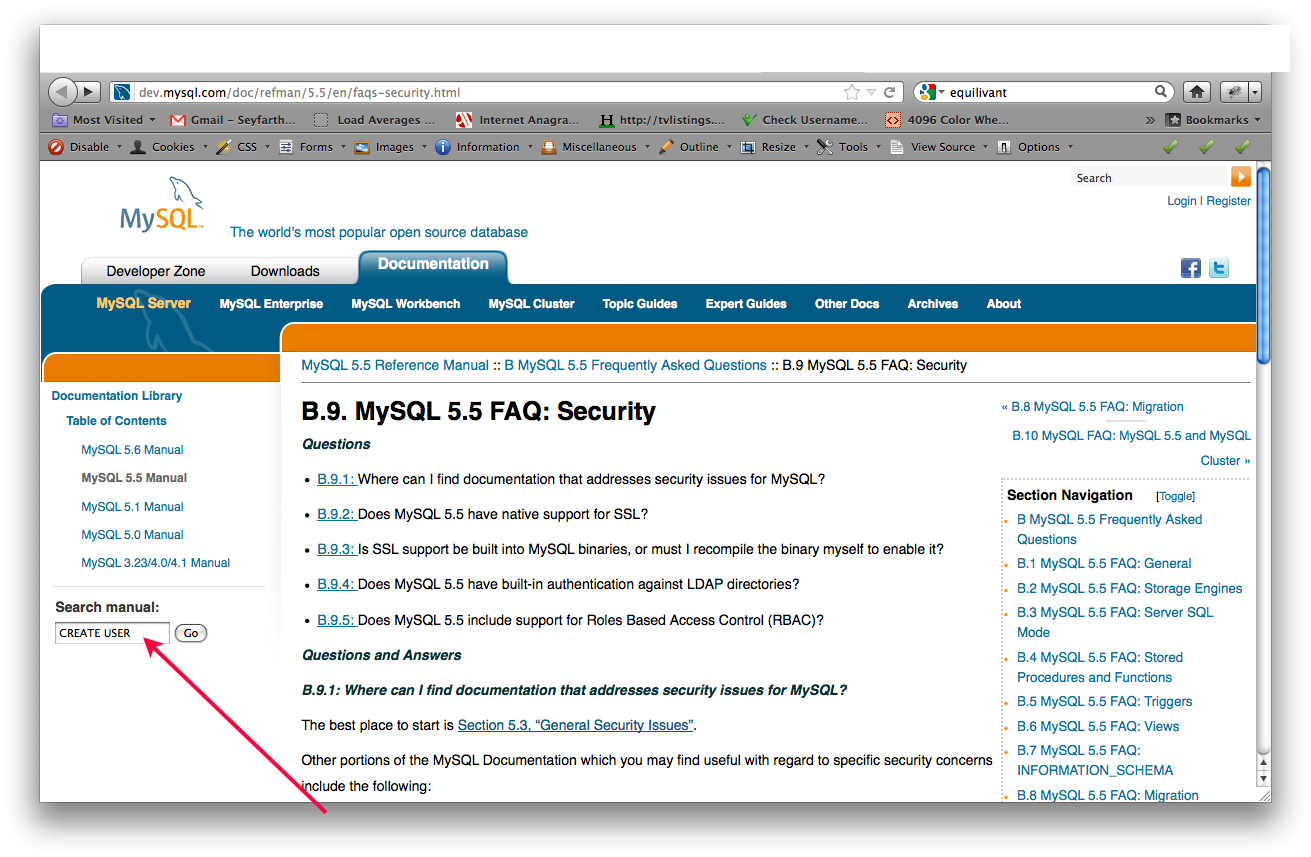

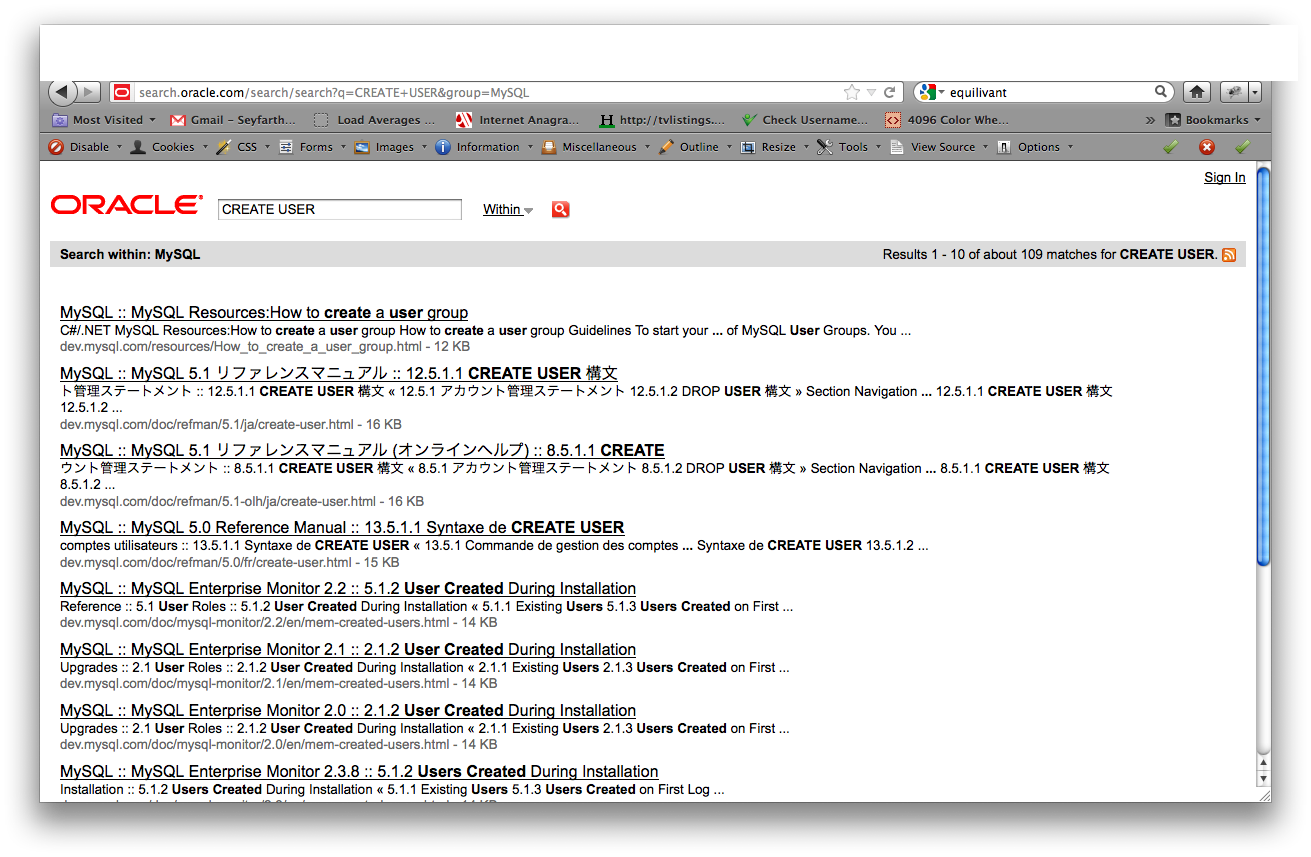
